Saturday, 16 January 2016

Friday, 31 January 2014

Areamoeba

Just before the holidays, I spent some time on a small project dictated largely by floor areas. We were still in the early stages of design at the time, so the team was focused on formal explorations which, for the most part, took the form of blobular figure-ground studies. Keeping track of the tight area constraints while shuffling control points proved to be a frustrating ordeal for many, so I cooked up a little form-finding tool to help out.

What you're looking at in the video above can be thought of as an area-seeking polygon. A handful of physical forces act along its perimeter, allowing it to autonomously pursue a target area while being pushed, pulled, and pinned by the designer. Each vertex is subject to axial spring forces, bending forces, and inflation forces, all calculated relative to its neighbours. At each step, parameters associated with these forces (rest length, strength, etc.) are adjusted according to the difference between the polygon's target area and its current area, causing the perimeter to shrink or grow incrementally until the target area is reached.
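
For anyone curious about the mechanics, here is a minimal sketch of the idea in plain Java. It is not the code from the sketch linked below, just one way such a loop could look. Several things are assumptions made for illustration: the class name `AreaSeekingPolygon`, all constants, the overdamped update (forces move vertices directly, with no velocity), the bending force modelled as a pull toward the midpoint of the two neighbours, a rest length tied to the current mean edge length, and the choice to adapt only the inflation strength from the area error.

```java
/** Hypothetical, simplified area-seeking polygon (overdamped: forces move vertices directly). */
final class AreaSeekingPolygon {
    // Illustrative constants, not values taken from the original tool.
    static final double SPRING = 0.2, BEND = 0.1, KP = 0.05, KI = 0.001;

    final double[] x, y;
    final double targetArea;
    double pressure = 0.0; // slowly adapted part of the inflation strength

    AreaSeekingPolygon(int n, double radius, double targetArea) {
        this.x = new double[n];
        this.y = new double[n];
        this.targetArea = targetArea;
        for (int i = 0; i < n; i++) { // start as a counter-clockwise circle
            double a = 2.0 * Math.PI * i / n;
            x[i] = radius * Math.cos(a);
            y[i] = radius * Math.sin(a);
        }
    }

    /** Shoelace formula; positive for counter-clockwise vertex order. */
    double area() {
        double sum = 0.0;
        for (int i = 0, n = x.length; i < n; i++) {
            int j = (i + 1) % n;
            sum += x[i] * y[j] - x[j] * y[i];
        }
        return 0.5 * sum;
    }

    double meanEdgeLength() {
        double sum = 0.0;
        for (int i = 0, n = x.length; i < n; i++) {
            int j = (i + 1) % n;
            sum += Math.hypot(x[j] - x[i], y[j] - y[i]);
        }
        return sum / x.length;
    }

    void step() {
        int n = x.length;
        double error = (targetArea - area()) / targetArea; // > 0 means "too small"
        pressure += KI * error;                    // strength creeps until the error vanishes
        double inflate = KP * error + pressure;    // total inflation strength this step
        double rest = 0.9 * meanEdgeLength();      // assumption: keeps springs in mild tension
        double[] fx = new double[n], fy = new double[n];
        for (int i = 0; i < n; i++) {
            int prev = (i + n - 1) % n, next = (i + 1) % n;
            for (int j : new int[] {prev, next}) { // axial springs to both neighbours
                double dx = x[j] - x[i], dy = y[j] - y[i];
                double len = Math.hypot(dx, dy);
                if (len > 1e-12) {
                    double s = SPRING * (len - rest) / len;
                    fx[i] += s * dx;
                    fy[i] += s * dy;
                }
            }
            // Bending stand-in: pull toward the midpoint of the neighbours.
            fx[i] += BEND * (0.5 * (x[prev] + x[next]) - x[i]);
            fy[i] += BEND * (0.5 * (y[prev] + y[next]) - y[i]);
            // Inflation along the outward normal (perpendicular to prev -> next).
            double tx = x[next] - x[prev], ty = y[next] - y[prev];
            double tl = Math.hypot(tx, ty);
            if (tl > 1e-12) {
                fx[i] += inflate * ty / tl;
                fy[i] -= inflate * tx / tl;
            }
        }
        for (int i = 0; i < n; i++) { x[i] += fx[i]; y[i] += fy[i]; }
    }

    public static void main(String[] args) {
        AreaSeekingPolygon poly = new AreaSeekingPolygon(16, 1.0, 6.0);
        for (int i = 0; i < 2000; i++) poly.step();
        System.out.println("area after 2000 steps: " + poly.area());
    }
}
```

Pinning or dragging a vertex would amount to overwriting its position after each `step()`; the remaining vertices then rearrange themselves to make up the area.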

While we've moved on with the project this tool was developed for, I'm still interested in pushing it further when time allows. In particular, there's no reason why multiple area-seeking polygons couldn't be nested within each other for more complex spatial organizations.

For now, here's a download link to the Processing sketch. There aren't any dependencies so it should work in Processing 2.0 straight out of the box.

Platforms: Processing

Sunday, 6 October 2013

Pack Attack

So I was browsing the RhinoCommon SDK help file one day at work and I stumbled onto the RTree class. I'm not sure how I hadn't noticed it before since it's intuitively nestled next to a handful of other classes I use on a regular basis.

Like other spatial tree data structures, this not-so-hidden gem is used to optimize spatial queries by quickly ruling out large portions of the search space. More importantly, the RhinoCommon implementation is flexible and super straightforward to use. Simply insert objects into the tree, search the tree with a callback method of your choice, and go spend the several hundred milliseconds you just saved with your loved ones. Here's an example in Grasshopper where it's used to speed up the removal of duplicate points. If you turn on the profiler widget, you'll see how it stacks up against a brute-force approach.
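
The insert-then-search-with-callback pattern isn't specific to Rhino, so here is a rough sketch of it in plain Java. To be clear about what's swapped in: this is not the RhinoCommon API and not an R-tree. A uniform grid stands in for the tree, and `GridIndex`, `Hit`, and `removeDuplicates` are made-up names. It only shows the shape of the idea: each query looks at a few nearby buckets instead of every point.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical uniform-grid index, standing in for a spatial tree. */
final class GridIndex {
    /** Search callback, invoked once per stored point found in range. */
    interface Hit { void found(int id); }

    private final double cell;
    private final Map<Long, List<Integer>> cells = new HashMap<>();
    private final List<double[]> points = new ArrayList<>();

    GridIndex(double cellSize) { this.cell = cellSize; }

    private long cellOf(double v) { return (long) Math.floor(v / cell); }

    // Packs two cell coordinates into one key; clashes only cost extra distance checks.
    private static long key(long ix, long iy) { return (ix << 32) ^ (iy & 0xffffffffL); }

    int insert(double x, double y) {
        int id = points.size();
        points.add(new double[] {x, y});
        cells.computeIfAbsent(key(cellOf(x), cellOf(y)), k -> new ArrayList<>()).add(id);
        return id;
    }

    /** Reports every stored point within radius of (x, y). Radius must not exceed the cell size. */
    void search(double x, double y, double radius, Hit hit) {
        long cx = cellOf(x), cy = cellOf(y);
        for (long ix = cx - 1; ix <= cx + 1; ix++) {
            for (long iy = cy - 1; iy <= cy + 1; iy++) {
                List<Integer> bucket = cells.get(key(ix, iy));
                if (bucket == null) continue;
                for (int id : bucket) {
                    double[] p = points.get(id);
                    if (Math.hypot(p[0] - x, p[1] - y) <= radius) hit.found(id);
                }
            }
        }
    }

    /** Keeps the first point of every group that lies within tolerance of an already kept point. */
    static List<double[]> removeDuplicates(List<double[]> input, double tolerance) {
        GridIndex index = new GridIndex(tolerance);
        List<double[]> unique = new ArrayList<>();
        for (double[] p : input) {
            boolean[] seen = {false};
            index.search(p[0], p[1], tolerance, id -> seen[0] = true);
            if (!seen[0]) {
                index.insert(p[0], p[1]);
                unique.add(p);
            }
        }
        return unique;
    }
}
```

The brute-force equivalent compares every point against every point kept so far, which is where the profiler difference comes from.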

Anyways, this discovery led to several adaptive packing experiments shown below. Here the RTree class is used to manage collisions among a dynamic population of spheres which are attracted to an input surface (NURBS in the first video, implicit in the other two). A new sphere is added to the population if the average pressure felt by the existing spheres falls below a given threshold. Similarly, if the average pressure exceeds a second, higher threshold, a sphere is removed. The RTree callback method handles both the calculation and application of repulsion forces and the recording of pressures across the system.
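
The add/remove rule is simple enough to sketch in a few lines of Java. This is a guess at the general shape rather than the code behind the videos: "pressure" is assumed here to be the summed overlap depth a sphere feels, the pair loop is brute force purely for readability (it is the part a spatial tree would replace), and `AdaptivePopulation`, `pressures`, and `populationChange` are hypothetical names.

```java
/** Hypothetical sketch of a pressure-driven population rule with two thresholds. */
final class AdaptivePopulation {

    /**
     * Pressure per sphere, assumed to be the summed overlap depth with all
     * other spheres of equal radius. centers[i] = {x, y, z}.
     */
    static double[] pressures(double[][] centers, double radius) {
        double[] p = new double[centers.length];
        for (int i = 0; i < centers.length; i++) {
            for (int j = i + 1; j < centers.length; j++) {
                double dx = centers[j][0] - centers[i][0];
                double dy = centers[j][1] - centers[i][1];
                double dz = centers[j][2] - centers[i][2];
                double overlap = 2.0 * radius - Math.sqrt(dx * dx + dy * dy + dz * dz);
                if (overlap > 0.0) { // both spheres feel the same overlap
                    p[i] += overlap;
                    p[j] += overlap;
                }
            }
        }
        return p;
    }

    /** Returns +1 (add a sphere), -1 (remove one) or 0 (leave as is). Expects low < high. */
    static int populationChange(double[] pressures, double low, double high) {
        double sum = 0.0;
        for (double v : pressures) sum += v;
        double average = pressures.length == 0 ? 0.0 : sum / pressures.length;
        if (average < low) return 1;
        if (average > high) return -1;
        return 0;
    }
}
```

The gap between the two thresholds acts as a dead band, so the population doesn't flicker between adding and removing a sphere every iteration.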

In each case, the result is a near-uniform distribution of spheres across the given surface. While you could run the same process without a spatial tree, things could get out of hand pretty quickly: a brute-force collision check compares every sphere against every other, and the algorithm is essentially modifying its own input size each iteration. The RTree just ensures that execution time scales more reasonably with this input size - preventing the whole thing from going up in smoke.

Platforms: Rhino, Grasshopper, C#

Thursday, 5 September 2013

Stigmergic Particles

I've been getting a few questions on some recent work with indirect particle interaction so I figured I'd try to address them all in one fell swoop via blog post. Here goes.

Typically, interactions among a population of particles occur directly. Particles refer to each other's properties (position, velocity, acceleration, etc.) in order to build the forces which define their behaviour.

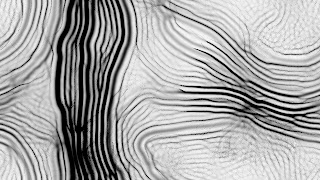

In both examples below, particles aren't particularly concerned with each other. Rather, they look to a shared environment in deciding how to act. This environment takes the form of a 2D scalar field where values represent the concentration of a diffusing communication medium or "pheromone". Particles build forces by locally sampling this field relative to their own positions. Depending on how they go about reading their environment, different collective patterns emerge over time. In fact, this is the only significant difference between the following two systems.

In the first example, each particle samples the scalar field at regularly spaced points on a circle centered at its position. A force vector is calculated by summing the gradient vectors found at each sample point and is then applied to the particle, determining its future position. The radius of the sample circle is changed throughout the video, causing the system to shift between a range of patterns.
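
As a rough illustration of that read step, here is a small Java sketch. The field layout (`field[x][y]`), the nearest-cell central-difference gradient, and the names `CircleSampler`, `gradient`, and `force` are all assumptions; the original may well interpolate the field or weight the samples differently.

```java
/** Hypothetical sketch: build a force by summing field gradients sampled on a circle. */
final class CircleSampler {

    static int clamp(int v, int lo, int hi) { return Math.max(lo, Math.min(hi, v)); }

    /** Central-difference gradient at the cell nearest to (px, py), clamped away from the border. */
    static double[] gradient(double[][] field, double px, double py) {
        int w = field.length, h = field[0].length;
        int ix = clamp((int) Math.round(px), 1, w - 2);
        int iy = clamp((int) Math.round(py), 1, h - 2);
        return new double[] {
            0.5 * (field[ix + 1][iy] - field[ix - 1][iy]),
            0.5 * (field[ix][iy + 1] - field[ix][iy - 1])
        };
    }

    /** Sums the gradients at `samples` evenly spaced points on a circle around (px, py). */
    static double[] force(double[][] field, double px, double py, double radius, int samples) {
        double fx = 0.0, fy = 0.0;
        for (int k = 0; k < samples; k++) {
            double a = 2.0 * Math.PI * k / samples;
            double[] g = gradient(field, px + radius * Math.cos(a), py + radius * Math.sin(a));
            fx += g[0];
            fy += g[1];
        }
        return new double[] {fx, fy};
    }
}
```

Changing `radius` changes which part of the field each particle "listens" to, which is the knob being turned in the video.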

The particles in the second example are less thorough in how they read the field. They only cast two sample points in front of themselves - one to the left and one to the right. The forward biasing of these points results in a completely different family of patterns than seen in the first example. More information on the inner workings of this system can be found here.
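
A two-sensor read could look something like the Java sketch below. It is only a guess at the general scheme: the rule of turning toward the sensor with the higher reading, the wrap-around sampling, and every name and parameter (`TwoSensorParticle`, `sensorAngle`, `sensorDist`, `turn`, `speed`) are assumptions. "Left" here means a positive angle offset, which appears on the right in Processing's y-down screen coordinates.

```java
/** Hypothetical particle that steers using two forward-facing sample points. */
final class TwoSensorParticle {
    double x, y, heading; // heading in radians

    TwoSensorParticle(double x, double y, double heading) {
        this.x = x;
        this.y = y;
        this.heading = heading;
    }

    /** Nearest-cell lookup with wrap-around borders. */
    static double sample(double[][] field, double px, double py) {
        int ix = Math.floorMod((int) Math.floor(px), field.length);
        int iy = Math.floorMod((int) Math.floor(py), field[0].length);
        return field[ix][iy];
    }

    void step(double[][] field, double sensorAngle, double sensorDist, double turn, double speed) {
        double left = sample(field,
                x + sensorDist * Math.cos(heading + sensorAngle),
                y + sensorDist * Math.sin(heading + sensorAngle));
        double right = sample(field,
                x + sensorDist * Math.cos(heading - sensorAngle),
                y + sensorDist * Math.sin(heading - sensorAngle));
        // Assumed rule: rotate toward the stronger reading, go straight on a tie.
        if (left > right) heading += turn;
        else if (right > left) heading -= turn;
        x += speed * Math.cos(heading);
        y += speed * Math.sin(heading);
    }
}
```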

Particles can't successfully interact through a read-only environment, however. Not much can happen if everyone is listening and no one is talking. In order to close the communicative feedback loop, particles must also write to their shared environment. This occurs through pheromone deposition, whereby particles modify local values of the scalar field by either pulling them towards a target value or adding/removing a constant. Diffusion then helps these modifications propagate through the environment so others can take notice.
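
The write side can be sketched in Java along these lines. Both deposition styles mentioned above are included; the diffusion step is an ordinary explicit 4-neighbour scheme with wrap-around borders, which is an assumption on my part, as are the names `PheromoneField`, `depositToward`, `depositAdd`, and `diffuse`.

```java
/** Hypothetical pheromone field with two deposition styles and a simple diffusion step. */
final class PheromoneField {
    double[][] v; // v[x][y] = concentration

    PheromoneField(int width, int height) { v = new double[width][height]; }

    /** Pulls the local value a fraction `rate` (0..1) of the way toward `target`. */
    void depositToward(int x, int y, double target, double rate) {
        v[x][y] += rate * (target - v[x][y]);
    }

    /** Adds a constant amount; pass a negative amount to remove. */
    void depositAdd(int x, int y, double amount) {
        v[x][y] += amount;
    }

    /** One explicit diffusion step (4-neighbour Laplacian, wrap-around); keep rate <= 0.25. */
    void diffuse(double rate) {
        int w = v.length, h = v[0].length;
        double[][] next = new double[w][h];
        for (int x = 0; x < w; x++) {
            for (int y = 0; y < h; y++) {
                double laplacian = v[(x + 1) % w][y] + v[(x + w - 1) % w][y]
                        + v[x][(y + 1) % h] + v[x][(y + h - 1) % h]
                        - 4.0 * v[x][y];
                next[x][y] = v[x][y] + rate * laplacian;
            }
        }
        v = next;
    }

    double total() {
        double sum = 0.0;
        for (double[] column : v) for (double value : column) sum += value;
        return sum;
    }
}
```

With wrap-around borders this diffusion step only spreads concentration around without changing the total, so any decay would have to be added separately.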

With both read and write mechanisms in place, particles are able to detect and react to each other's signals, producing a variety of emergent patterns. Here the goal is simply the production of visual complexity. As mentioned in previous posts, however, I've been busy applying similar principles of indirect interaction among a population of autonomous entities to solving architectural design problems - specifically those relating to programmatic organization.

Platforms: Java, Processing

Saturday, 29 June 2013

Smart Geometry

Just a quick (and tardy) post showing some output from the Volatile Territories cluster at Smart Geometry 2013. Below is a look at the multi-agent space adjacency software sorting out a (much simplified) brief for a mixed-use high rise. Agents were restricted to a predefined building envelope, with the core, front lobby, and roof garden being fixed from the get-go.

In cases where the brief becomes sufficiently complex, like the one above, real-time user interaction starts to play a key role in getting the system to settle properly. By temporarily reducing the floor area that each programmatic element is trying to occupy, agents contract, providing the necessary wiggle room for less satisfied members of the population to explore other neighborhoods. Hopefully I'll be able to integrate this conditional contraction into the agent behavior itself with a bit more tinkering.

Also, I'm happy to say we were able to 3D print with all the colours of the wind. Hats off to the Bartlett. Have a look below.
